A visual studio for composing Midjourney prompts

What if, instead of guessing which words Midjourney understands, you could pick them from a visual library?

Every Midjourney user hits the same wall. You describe what you want in plain English, and the model produces something that’s technically related but not quite the image you had in mind. The gap is rarely about what you wanted. It’s about how you phrased it. Midjourney responds better to specific combinations of words. Not single descriptors, but compound phrases that carry visual weight. “Cinematic” is a gesture. “Cinematic film still with directional volumetric lighting” is an instruction the model knows how to follow.

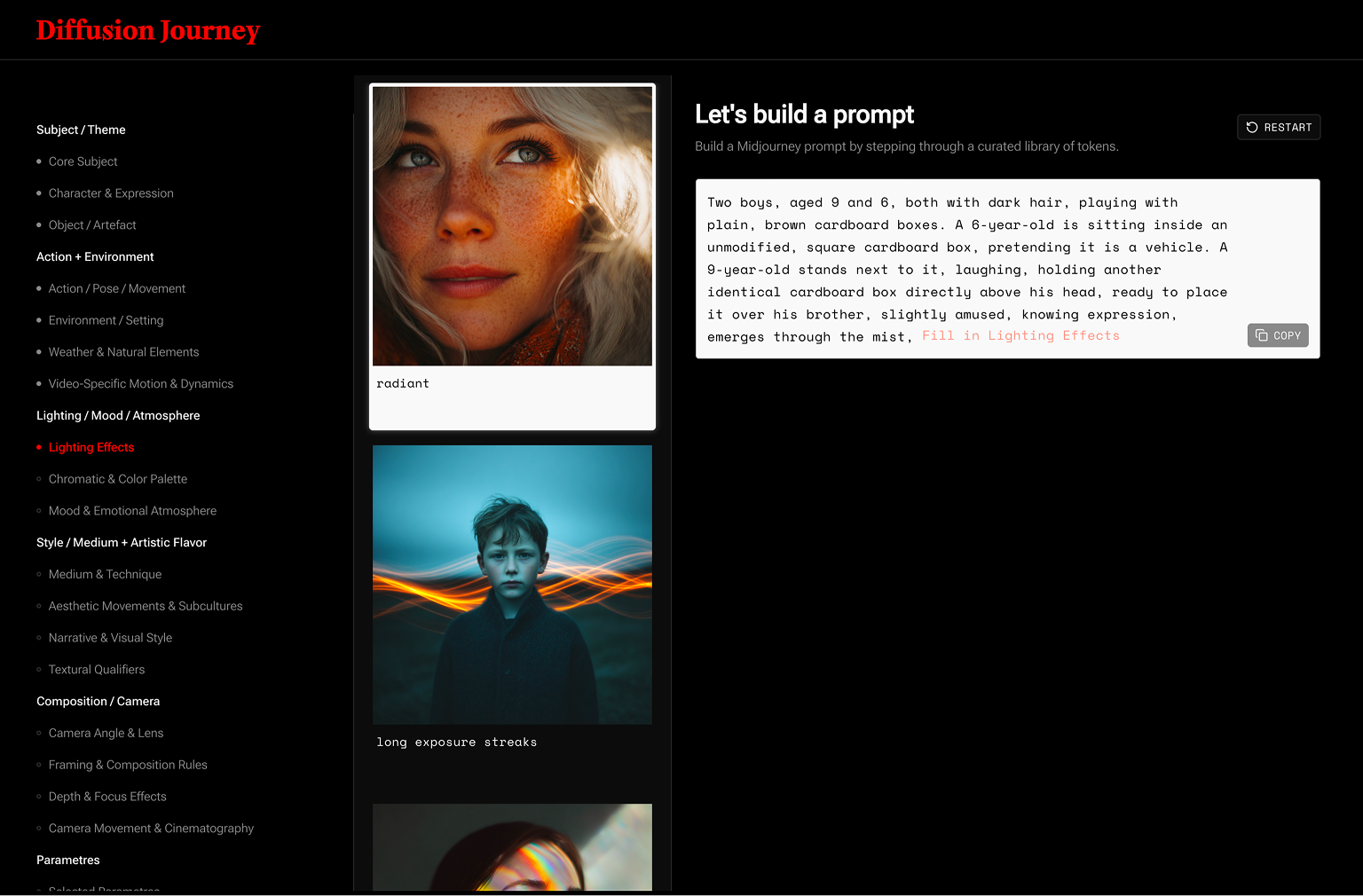

Diffusion Journey starts from this insight: prompt-writing is a vocabulary problem, not a creativity problem. At its core is a curated database of tokens, organised in the same sequence Midjourney processes best: moving from “character and expression” through “environment and lighting”, all the way to “camera angle and depth of field”.

Every token has been verified against a generated image. An automated pipeline runs each one through the model and captures the result, so the visual reference you see when browsing is the actual output, not a guess. The sequence matters too. A strong token in the wrong position pulls against the ones around it. In the right order, they compound.

The app walks you through every category in sequence. At each step, you browse tokens alongside their reference images, pick one, or type your own. The one thing that stays entirely open is the Core Subject. The starting idea is always yours, unguided. Every other compositional variable is supported. At the end, you set your parameters: aspect ratio, version, style weight. The prompt assembles itself. Ready to run.

The starting point is always a sentence. Not a prompt. Just the thing you want to picture. Take something ordinary. Two kids playing with cardboard boxes. The kind of idea that comes from life, not a brief. You type the core subject and move into the sequence: character expression, setting, light, camera angle. At each step you decide whether to shape that variable or let the model fill it in. By the end, twenty small decisions have become a single prompt that describes not just what’s in the frame, but how it feels.

Below is the prompt from this session, and the image it produced.

two brothers, aged 9 and 6, with dark hair playing with cardboard boxes, mischievous grin, identical unmodified square brown cardboard boxes, the standing 9-year-old mid-prank lowering a cardboard box over his unsuspecting seated younger brother, warehouse interior, soft directional window light with crisp shadows, editorial photo, low angle shot --ar 4:5 --v 8.1

Let’s take another scenario. One that starts like a film premise rather than a memory.

Apes occupying the Acropolis. A cinematic premise with no real-world reference to pull from. This is where the sequence does its heaviest lifting. Without a structured vocabulary, you’d be guessing at descriptors and iterating blind. With Diffusion Journey, the cinematic grammar is already in the token set. You’re directing, not guessing.

Below is the prompt from this session, and the image it produced.

Small platoon of approximately twelve armed apes, stoic battle-hardened resolve, detailed gear armor and weapons on each individual, standing in a tight defensive cluster formation, Parthenon on the Acropolis hill atop a foggy cliff, drifting mist, silent stillness, cinematic film still, layered foreground-midground-background composition with a ring of apes encircling the distant Parthenon in the midground, drone view --raw --ar 16:9 --v 8.1

Key Features

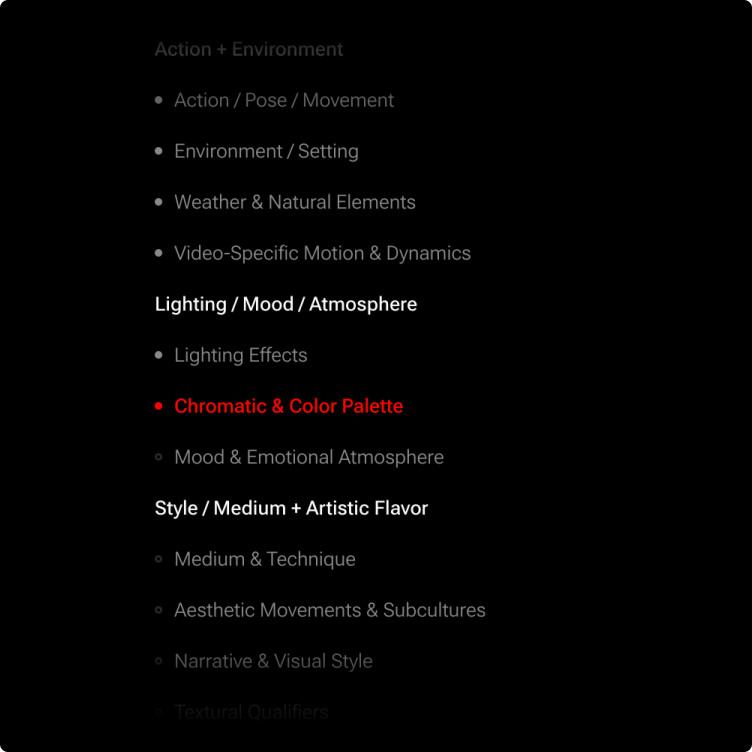

The Sequence

Diffusion Journey follows the same logic Midjourney uses to read a prompt: subject first, then action and environment, then light and mood, then style and camera. Moving through categories in order isn’t a constraint. It’s how you avoid the gaps that make a prompt fall short.
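As a rough sketch of that ordering, assuming a simplified category list (the names below are hypothetical, not the app's actual schema), tokens picked in any order can be joined back in sequence:

```typescript
// Hypothetical category names, in the order described above.
const SEQUENCE = ["subject", "action", "environment", "light", "mood", "style", "camera"] as const;
type Category = (typeof SEQUENCE)[number];

// One pick per category; categories left undefined are skipped.
type Picks = Partial<Record<Category, string>>;

// Join the chosen tokens in sequence order, regardless of the order they were picked in.
function assembleBody(picks: Picks): string {
  return SEQUENCE
    .map((category) => picks[category]?.trim())
    .filter((token): token is string => Boolean(token))
    .join(", ");
}

const body = assembleBody({
  camera: "low angle shot",
  subject: "two brothers playing with cardboard boxes",
  light: "soft directional window light with crisp shadows",
  environment: "warehouse interior",
  style: "editorial photo",
});
// body === "two brothers playing with cardboard boxes, warehouse interior, soft directional window light with crisp shadows, editorial photo, low angle shot"
```

Skipped categories simply drop out, which is the code equivalent of letting the model fill that variable in.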

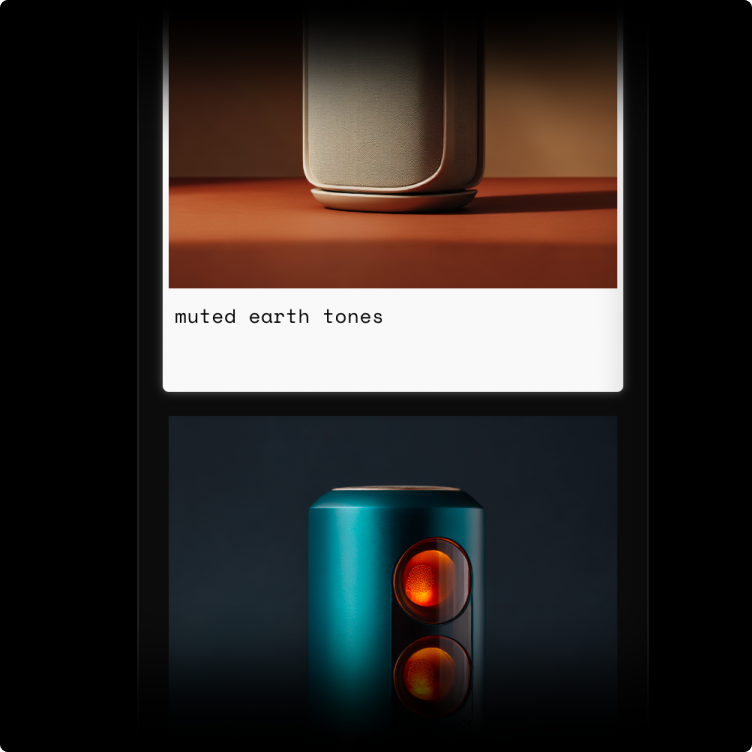

Token Visuals

Every token in the database has a reference image generated directly from that token. You’re not reading a description and imagining the result. You’re looking at it. Pick what you want. Skip what you don’t.

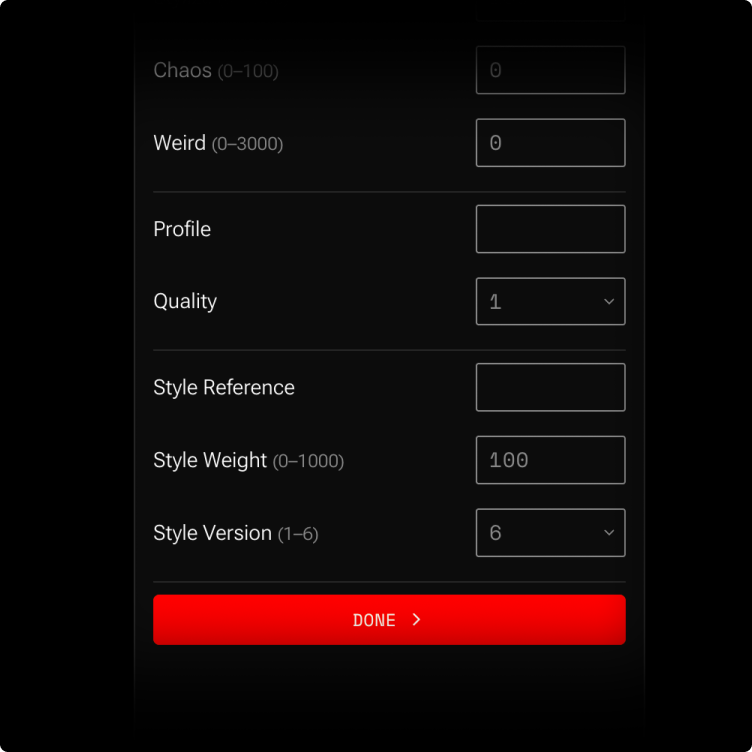

Parameters

The final step is yours entirely. Aspect ratio, version, style weight — the variables that no token can decide for you. Set them once for a project, or change them every run. The prompt doesn’t close until you’re ready.
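A minimal sketch of that last step, assuming only the parameters that appear in the example prompts (aspect ratio, version, and the raw flag), written with the double-hyphen spelling of the flags. The function and field names are hypothetical, and style weight is left out because this write-up doesn't show how it is spelled in a prompt:

```typescript
// Settings mirroring the parameters seen in the example prompts.
interface Params {
  aspectRatio: string; // for example "4:5"
  version: string; // for example "8.1"
  raw?: boolean; // adds the --raw flag when true
}

// Validate the aspect ratio and format the suffix appended after the prompt body.
function formatParams({ aspectRatio, version, raw = false }: Params): string {
  if (!/^\d+:\d+$/.test(aspectRatio)) {
    throw new Error(`Invalid aspect ratio: ${aspectRatio}`);
  }
  const flags = [raw ? "--raw" : "", `--ar ${aspectRatio}`, `--v ${version}`];
  return flags.filter((flag) => flag !== "").join(" ");
}

// Attach the parameter suffix to an already assembled prompt body.
function finishPrompt(body: string, params: Params): string {
  return `${body.trim()} ${formatParams(params)}`;
}

const finalPrompt = finishPrompt("warehouse interior, editorial photo, low angle shot", {
  aspectRatio: "4:5",
  version: "8.1",
});
// finalPrompt === "warehouse interior, editorial photo, low angle shot --ar 4:5 --v 8.1"
```

Keeping the parameters separate from the tokens means the same body can be re-run at a different ratio or version without touching the vocabulary.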

Practical Examples

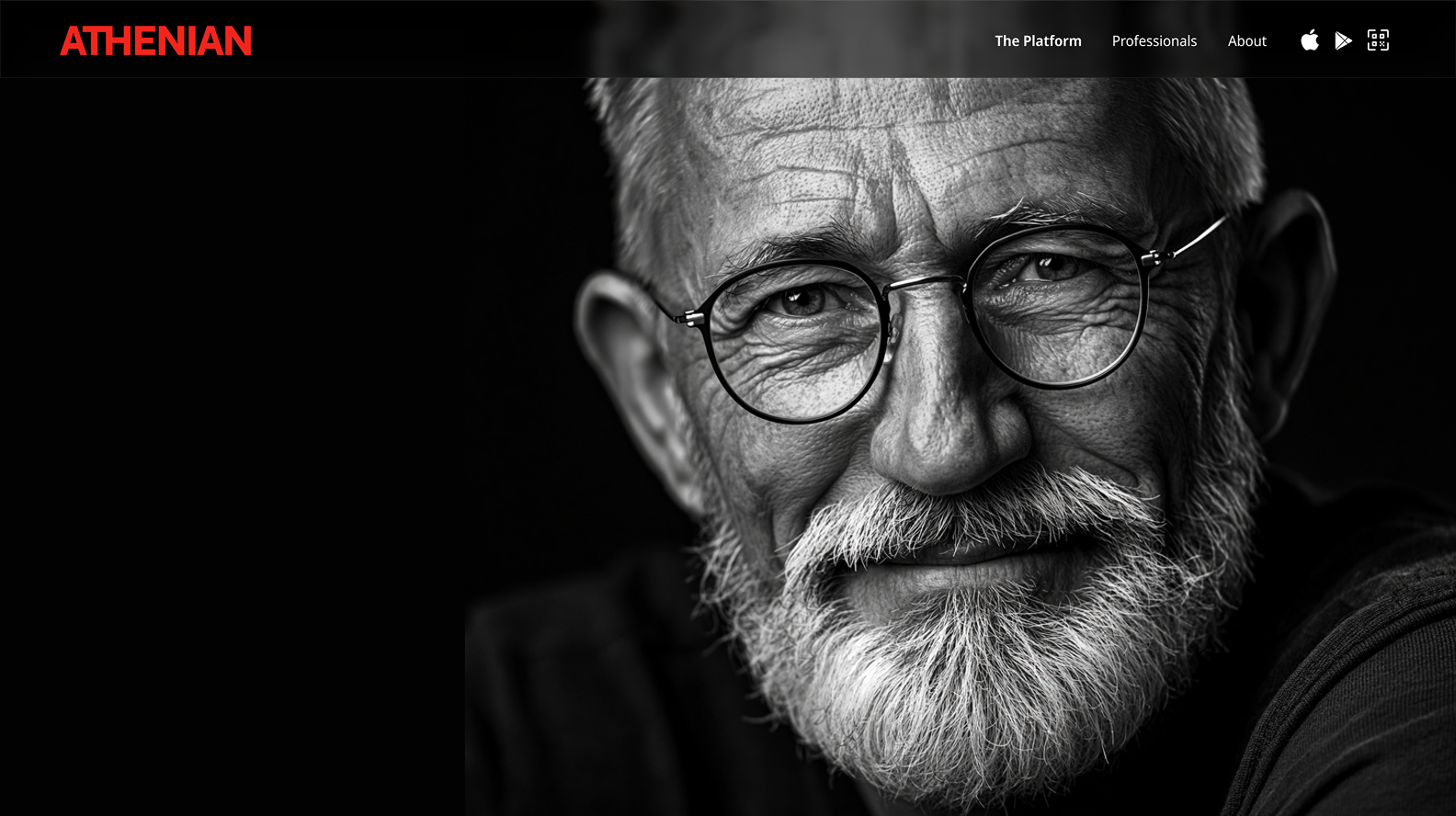

A hero image is one of the most repeatable problems in product design. Every new venture needs one image that carries the whole idea without a word of explanation.

Athenian was a marketplace where knowledge professionals could trade expertise at a granular level. Not consultancy, not courses — specific insight exchanged between specific people. The image needed to feel cerebral and human at the same time. Nothing in a stock library comes close to that combination.

Diffusion Journey turned that brief into a sequence of deliberate token choices. The same prompt structure, adjusted slightly, generates an entire image family: hero, feature illustrations, social assets.

A wise good-looking 50-year-old man with a trimmed white beard wearing glasses, confident subtle smile, looking straight at the camera, isolated black studio background, dramatic studio key light with deep shadows, high-contrast grayscale, realistic studio portrait photograph --raw --ar 16:9 --v 8.1

UX designers are often asked to make personas feel real. Not a demographic profile on a slide, but a person you could picture walking into a room.

For Athenian, the personas needed to reflect a specific kind of professional: knowledgeable, independent, a little unconventional. Diffusion Journey let me define that character type through tokens and generate a consistent image family across multiple personas. Same lighting logic, same visual style, different faces.

A 26-year-old British junior analyst with short dark messy hair and modern stylish glasses, candid energetic mid-conversation smile glancing off-camera with undeniable presence, rolling up his shirt sleeves, modern office space, studio HDRI lighting, candid editorial photograph, moment-in-the-life portraiture, tack-sharp focus on the subject’s eyes, tailored smart-casual shirt --ar 4:5 --v 8.1

A 50-year-old man with grey hair, a neat grey beard, and practical glasses, quiet confidence in deep concentration, smartphone held in his hand, seated head down intensely focused on the screen with his index finger tapping mid-tap, train carriage during commute, soft ambient natural light through the carriage window, editorial photograph, dark grey tailored suit jacket with a subtle patterned tie --ar 4:5 --v 8.1

Social media campaigns live or die on visual consistency. Every post needs to feel like it belongs to the same world, without looking like it came from the same template.

For a preloved luxury bags business, the brief was about desire and restraint in equal measure. The product is high-end but second-hand, so the images needed elegance without excess. Diffusion Journey made it straightforward to define that visual register once and generate across it repeatedly.

A young woman in a beige long-sleeved top laughing in a car, candid unguarded laugh with her eyes obscured by tousled hair, an architectural cream-coloured top-handle leather handbag with clean minimalist lines, smooth grain leather, and discreet polished gold hardware resting half-tucked beside her on the seat, right hand adorned with stacked rings raised near her forehead with her left arm bent reaching upward mid-laugh, car interior with light fabric ceiling and textured brown leather upholstery, warm soft natural daylight through the car window, spontaneous unguarded joy, 35mm film editorial photograph, candid lifestyle campaign moment-in-the-life, shallow depth of field with the subject tack-sharp and the bag falling into soft background blur, medium close-up at passenger-seat eye level on an 85mm lens --ar 4:5 --v 8.1

A young woman in a crisp white shirt and dark navy tailored trousers with a genuine joyful smile and charismatic enigmatic presence, classic structured-leather handbag without visible branding held in her lap with her hands wrapped around most of it so only the edges are visible, head slightly turned caught mid-glance, sunlit warm-toned interior of a quiet café, warm soft natural daylight through a window, spontaneous unguarded joy, 35mm film editorial photograph, candid lifestyle campaign moment-in-the-life, shallow depth of field with the subject tack-sharp and the bag in soft foreground focus, telephoto medium close-up on an 85mm lens --ar 4:5 --v 8.1

Presentation decks rarely get the visual treatment they deserve. Slides either lean on stock photography that feels generic, or go without imagery altogether.

For a pitch around low-cost off-season tourism in Greece, the imagery needed to do real persuasive work: make an empty-season destination feel like the better choice, not the compromise. Diffusion Journey let me build a consistent visual language for the deck. Same atmospheric light, same sense of quiet arrival, across every slide.

A picturesque traditional Greek stone-built mountain village with slate-roofed houses nestled into a forested mountainside, stone bell tower rising among terracotta chimneys, lush evergreen pine and chestnut forest with snow-dusted peaks rising behind the village, soft winter haze drifting between the buildings and the mountain, warm soft winter morning light spilling from the village’s open doorways and lanterns onto the paved path ahead, warm earth tones against deep evergreen and pale haze blue, irresistible pull to follow the path wherever it leads, editorial travel photograph, tourism campaign hero image, eye-level first-person view stepping onto a worn paved stone path winding deeper into the village and disappearing around a bend, foreground paved path occupying the lower half of the frame curving inward and drawing the eye toward the unseen turn --ar 16:9 --v 8.1

Under the Hood

Diffusion Journey is built in Astro with Tailwind. The token database lives in a Google Sheet for now. Keeping it in a spreadsheet means the database stays editable, shareable, and easy to extend without touching the codebase.
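To illustrate why a spreadsheet is enough at this stage: a sheet can be exported as CSV and parsed in a few lines. The sketch below assumes hypothetical column names (category, token, imageUrl) and a simplified parser that does not handle line breaks inside quoted cells. It is not the app's actual loader, and fetching the export is left out.

```typescript
// Hypothetical row shape; the real sheet's columns are not described here.
interface TokenRow {
  category: string;
  token: string;
  imageUrl: string;
}

// Split one CSV line into fields, honouring double-quoted fields that contain commas.
function splitCsvLine(line: string): string[] {
  const fields: string[] = [];
  let current = "";
  let inQuotes = false;
  for (let i = 0; i < line.length; i++) {
    const ch = line[i];
    if (inQuotes) {
      if (ch === '"' && line[i + 1] === '"') {
        current += '"'; // escaped quote inside a quoted field
        i++;
      } else if (ch === '"') {
        inQuotes = false;
      } else {
        current += ch;
      }
    } else if (ch === '"') {
      inQuotes = true;
    } else if (ch === ",") {
      fields.push(current);
      current = "";
    } else {
      current += ch;
    }
  }
  fields.push(current);
  return fields;
}

// Parse CSV text (header row first) into token rows, skipping blank lines.
function parseTokenCsv(csv: string): TokenRow[] {
  const [header, ...lines] = csv.split(/\r?\n/).filter((l) => l.trim() !== "");
  if (header === undefined) return [];
  const columns = splitCsvLine(header).map((c) => c.trim());
  const at = (cells: string[], column: string) => (cells[columns.indexOf(column)] ?? "").trim();
  return lines.map((line) => {
    const cells = splitCsvLine(line);
    return { category: at(cells, "category"), token: at(cells, "token"), imageUrl: at(cells, "imageUrl") };
  });
}

const sample = [
  "category,token,imageUrl",
  'light,"soft directional window light, crisp shadows",light-01.png',
  "camera,low angle shot,camera-01.png",
].join("\n");
const rows = parseTokenCsv(sample);
// rows[0].token === "soft directional window light, crisp shadows"
```

Columns are looked up by header name rather than position, so reordering or adding columns in the sheet would not break the parse.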

Token collection runs on two pipelines. The first uses a CLIP interrogator: feed it an image that inspires you and it returns the prompt vocabulary that would most likely produce it. The second uses an automated agent to scrape X for trending Midjourney prompts, extract candidate tokens, and flag them for review. Nothing goes into the database unverified. Every token earns its place with a reference image generated from that token alone.
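The extraction step of the second pipeline could look something like this. It is a sketch under assumptions: prompts are treated as comma-separated phrases, a phrase becomes a candidate once it appears in at least two prompts, and the names and threshold are illustrative rather than taken from the real agent.

```typescript
// Drop everything from the first parameter flag onward ("--ar ..." or its em-dash form).
function stripParams(prompt: string): string {
  const index = prompt.search(/\s(--|\u2014)[a-z]/i);
  return index === -1 ? prompt : prompt.slice(0, index);
}

// Normalise a phrase so near-duplicates count as the same candidate.
function normalise(phrase: string): string {
  return phrase.trim().toLowerCase().replace(/\s+/g, " ");
}

interface Candidate {
  token: string;
  count: number; // number of prompts the phrase appeared in
  status: "needs-review";
}

// Count phrases across prompts, skip tokens already in the database, flag the rest for review.
function extractCandidates(prompts: string[], known: Set<string>, minCount = 2): Candidate[] {
  const counts = new Map<string, number>();
  prompts.forEach((prompt) => {
    // A Set ensures a phrase repeated inside one prompt is counted once.
    const phrases = new Set(stripParams(prompt).split(",").map(normalise).filter((p) => p !== ""));
    phrases.forEach((phrase) => {
      if (!known.has(phrase)) counts.set(phrase, (counts.get(phrase) ?? 0) + 1);
    });
  });
  return Array.from(counts.entries())
    .filter(([, count]) => count >= minCount)
    .sort((a, b) => b[1] - a[1] || a[0].localeCompare(b[0]))
    .map(([token, count]) => ({ token, count, status: "needs-review" as const }));
}

const scraped = [
  "apes on a hill, cinematic film still, drone view --ar 16:9",
  "a quiet harbour, Cinematic film still, low angle shot --ar 4:5",
  "portrait of a sailor, low angle shot, editorial photo --v 8.1",
];
const candidates = extractCandidates(scraped, new Set(["editorial photo"]));
// candidates contains "cinematic film still" and "low angle shot", each with count 2
```

Every result carries a needs-review status, so nothing here writes to the database. Verification against a generated reference image stays a separate step.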

Reflections

Diffusion Journey works best when you don’t quite know what you want. The sequence gives you somewhere to start, and the token visuals do the rest. You discover the image by moving through the categories rather than arriving with a fixed idea. That turns out to be a genuinely useful mode — not just for Midjourney, but for the early stages of any visual brief.

The limitation shows up at the other end. When you know exactly what you want, the sequence can feel like a detour. The structure that helps you find an idea becomes friction when the idea is already fully formed.

That tension is what the next version is for. A faster path for experienced users — fewer steps, more direct control, the same structured vocabulary underneath. The database stays. The guardrails come off.